
The results show that convergence can be made, on average, considerably faster than that of the conventional proximal gradient algorithm. We further establish the rate of convergence and present a simple and useful bound to characterize our proposed optimal damping sequence. This update corresponds to a single proximal gradient descent step of (3).
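A single proximal gradient step can be sketched as follows. Equation (3) is not reproduced on this page, so the sketch assumes a least-squares loss with an L1 (LASSO) penalty, for which the proximal operator is soft-thresholding. The function names, the step size `step`, and the penalty weight `lam` are illustrative assumptions, not taken from the paper.

```python
def soft_threshold(v, tau):
    """Proximal operator of tau * |.| applied to a scalar v."""
    if v > tau:
        return v - tau
    if v < -tau:
        return v + tau
    return 0.0


def gradient_least_squares(A, b, x):
    """Gradient of 0.5 * ||A x - b||^2, which is A^T (A x - b)."""
    residual = [
        sum(a_ij * x_j for a_ij, x_j in zip(row, x)) - b_i
        for row, b_i in zip(A, b)
    ]
    return [
        sum(A[i][j] * residual[i] for i in range(len(A)))
        for j in range(len(x))
    ]


def proximal_gradient_step(A, b, x, step, lam):
    """Gradient move on the smooth loss, then soft-thresholding (assumed L1 penalty)."""
    grad = gradient_least_squares(A, b, x)
    return [
        soft_threshold(x_j - step * g_j, step * lam)
        for x_j, g_j in zip(x, grad)
    ]


# Tiny illustrative problem (assumed data): identity design, two coefficients.
A = [[1.0, 0.0], [0.0, 1.0]]
b = [3.0, 0.2]
x_next = proximal_gradient_step(A, b, [0.0, 0.0], step=1.0, lam=0.5)
print(x_next)
```

In this toy case the large coefficient is shrunk toward zero and the small one is set exactly to zero, which is the sparsity-inducing behaviour the penalty is meant to provide.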

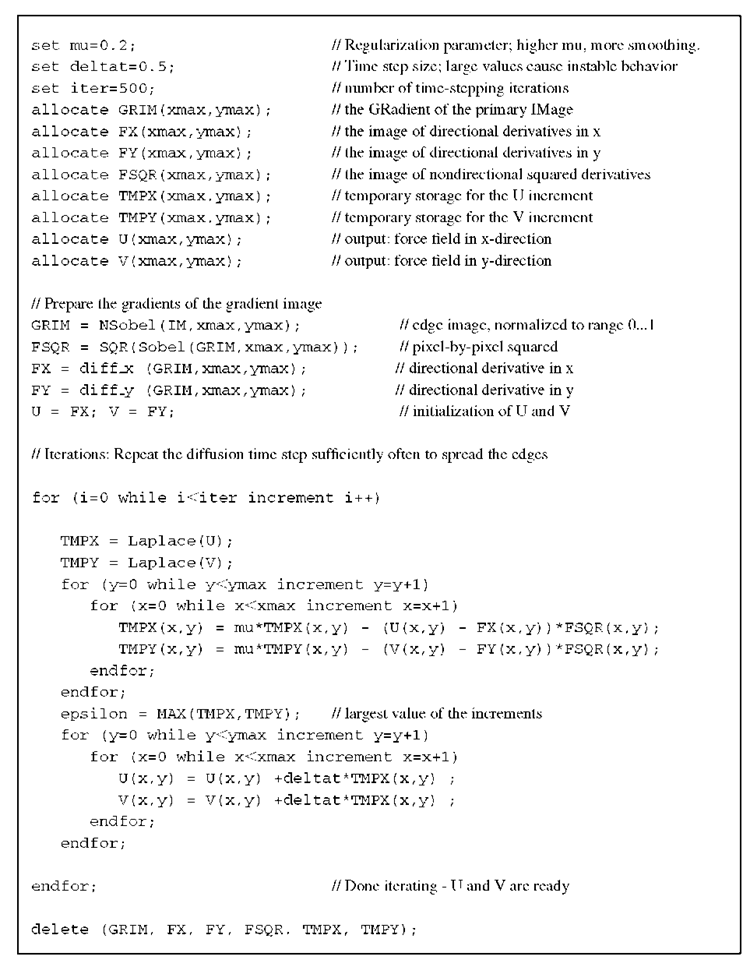

A recent proposal generalizes Nesterov's accelerated gradient (AG) method to the nonconvex setting. The proposed algorithm requires specification of several hyperparameters for its practical application. Aside from some general conditions, there is no explicit rule for selecting the hyperparameters, and it is unclear how different selections can affect convergence of the algorithm. In this article, we propose a hyperparameter setting based on the complexity upper bound to accelerate convergence, and consider the application of this nonconvex AG algorithm to high-dimensional linear and logistic sparse learning problems. A further survey of nonconvex regularizers for sparse recovery can be found in [25].

Given the approximate matrix X, we update the vector t in each iteration by employing a proximal gradient method using a homotopy strategy.

Related topics: penalty, proximal-algorithms, inverse-problems, convex-optimization, loss-functions, linear-operators, cost-function.

Pycsou is an open-source computational imaging software framework for Python providing highly optimised and scalable general-purpose computational imaging functionalities and tools, easy to reuse and share across imaging modalities. The general proximal gradient algorithm is designed to solve the problem in …

The formulation of basis pursuit relaxes the linear constraint of P1 in the … When the solution is sparse and the active submatrix is well conditioned (e.g., when A has RIP), local linear convergence can be established [LT92, HYZ08], and fast convergence in the final stage of the algorithm has also been observed [Nes07, HYZ08, WNF09]. The per-iteration cost of the proposed algorithm is dominated by three matrix-vector multiplications and the global convergence is guaranteed by results in …

From the abstract of the paper "Accelerated Gradient Methods for Sparse Statistical Learning with Nonconvex Penalties" by Kai Yang and 2 other authors: Nesterov's accelerated gradient (AG) is a popular technique to optimize objective functions comprising two components: a convex loss and a penalty function. While AG methods perform well for convex penalties, such as the LASSO, convergence issues may arise when it is applied to nonconvex penalties.
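To make the convex case concrete, here is a minimal sketch of an accelerated proximal gradient loop for the LASSO. It uses the standard FISTA-style momentum sequence as a stand-in; it is not the nonconvex AG algorithm or the damping sequence proposed in the paper. The data, the step size (taken as 1/L for this toy design), and all function names are assumptions for illustration.

```python
import math


def soft_threshold(v, tau):
    """Proximal operator of tau * |.| applied to a scalar v."""
    if v > tau:
        return v - tau
    if v < -tau:
        return v + tau
    return 0.0


def gradient_least_squares(A, b, x):
    """Gradient of the convex loss 0.5 * ||A x - b||^2."""
    residual = [
        sum(a_ij * x_j for a_ij, x_j in zip(row, x)) - b_i
        for row, b_i in zip(A, b)
    ]
    return [
        sum(A[i][j] * residual[i] for i in range(len(A)))
        for j in range(len(x))
    ]


def accelerated_proximal_gradient(A, b, lam, step, n_iter):
    """FISTA-style iteration: proximal step at an extrapolated point y."""
    x = [0.0] * len(A[0])
    y = list(x)
    t = 1.0
    for _ in range(n_iter):
        grad = gradient_least_squares(A, b, y)
        x_new = [
            soft_threshold(y_j - step * g_j, step * lam)
            for y_j, g_j in zip(y, grad)
        ]
        # Momentum weight from the usual t_{k+1} = (1 + sqrt(1 + 4 t_k^2)) / 2.
        t_new = (1.0 + math.sqrt(1.0 + 4.0 * t * t)) / 2.0
        momentum = (t - 1.0) / t_new
        y = [xn + momentum * (xn - xo) for xn, xo in zip(x_new, x)]
        x, t = x_new, t_new
    return x


# Assumed toy data: diagonal design, so A^T A has largest eigenvalue 4.
A = [[2.0, 0.0], [0.0, 1.0]]
b = [2.0, 0.1]
x_hat = accelerated_proximal_gradient(A, b, lam=0.5, step=0.25, n_iter=50)
print(x_hat)
```

Because the design here is diagonal, the minimizer can be checked by hand: the first coefficient solves 4x - 4 + 0.5 = 0, giving 0.875, and the second is thresholded to zero. With a nonconvex penalty the proximal step and the admissible momentum would differ, which is where the hyperparameter choices discussed in the abstract come in.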
